I haven’t been paying much attention to the 2023 election cycle, other than getting annoyed at all the silly polls for next...

Read More

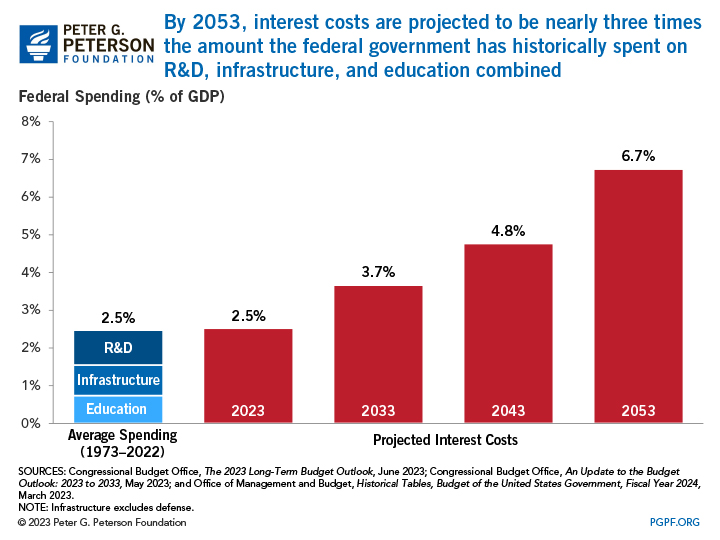

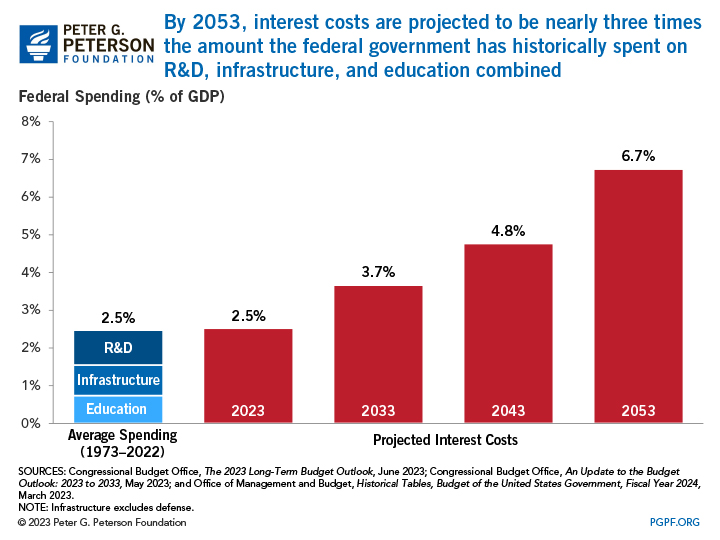

The chart in this morning’s reads shows what it is going to cost to fund the interest payments on the federal debt....

Read More

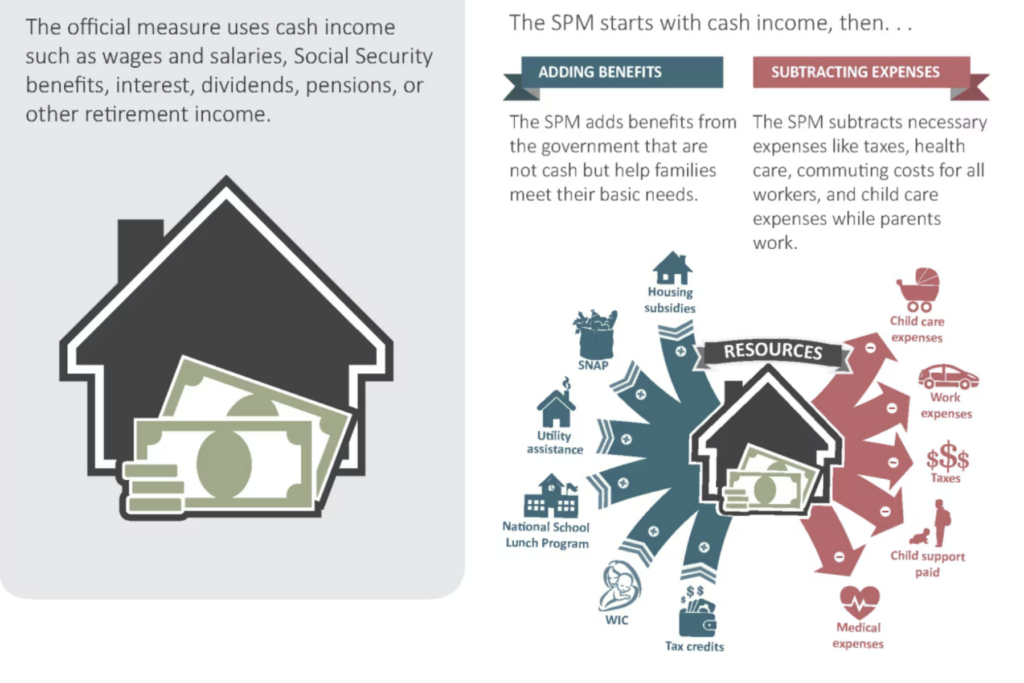

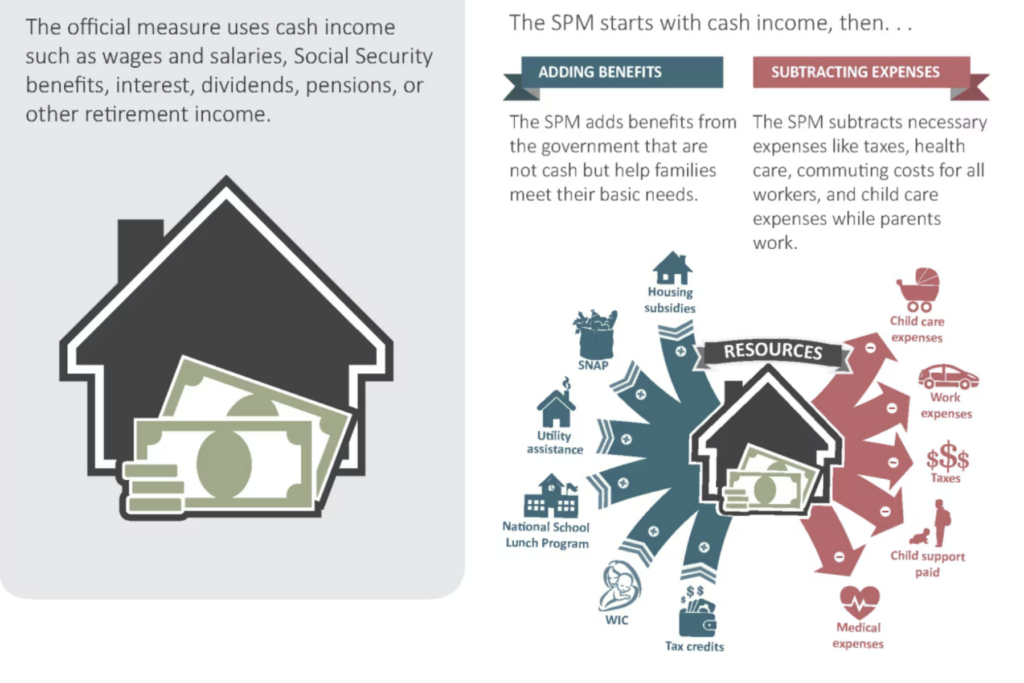

@TBPInvictus here: Let’s cut to the chase: “America’s Enormous Math Mistake” is a popular video on YouTube...

Read More

“Magazine covers, especially the Economist, are wonderful contrary indicators.” –@Trendsandtailrisks...

Read More

@TBPInvictus here: I want to avoid the usual “false equivalencies” and “both sides do it” approach, and ask a...

Read More

The Bull Stock Market Needs to Give Credit to the Calendar: Returns this year look stellar, but only because equities collapsed the last...

Read More

Very interesting listen by data scientist Cathy O’Neil about her book, Weapons of Math Destruction, which describes the dangers of...

Read More

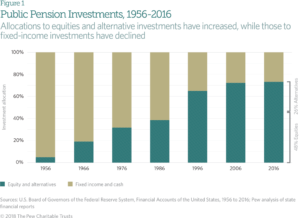

Gene Fama’s discussion earlier this week made me revisit some ideas on outperformance and the zero sum game. In particular, the...

Read More

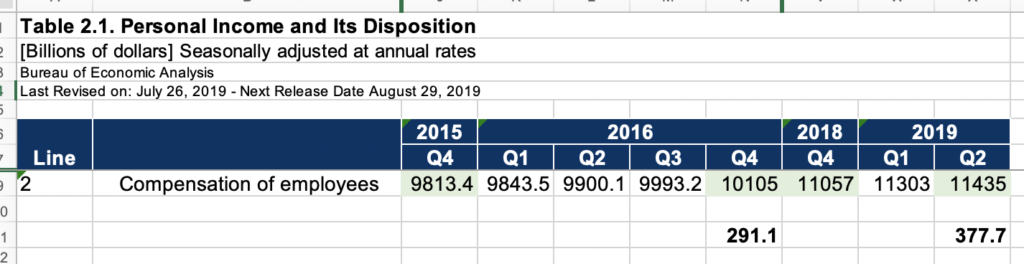

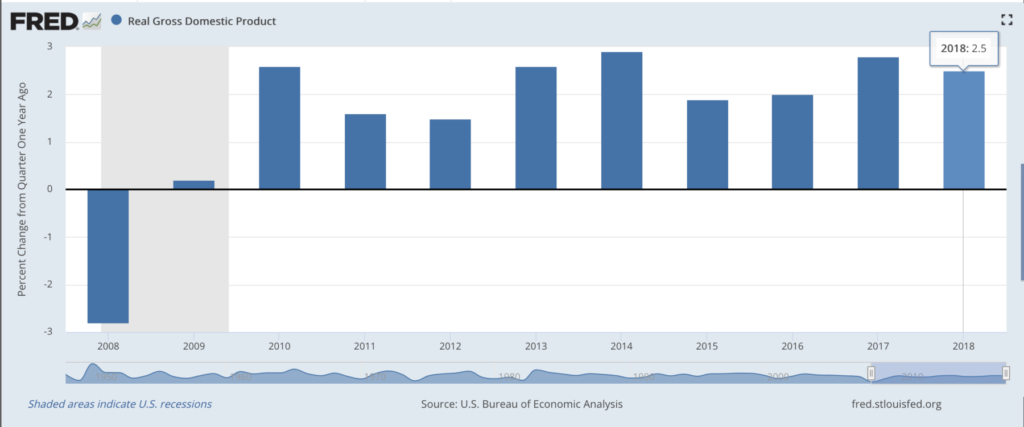

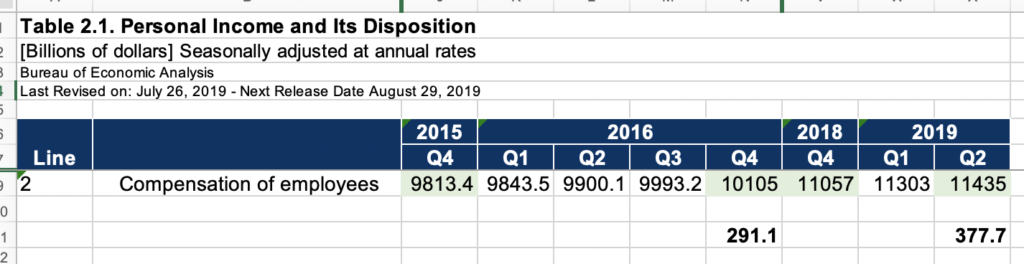

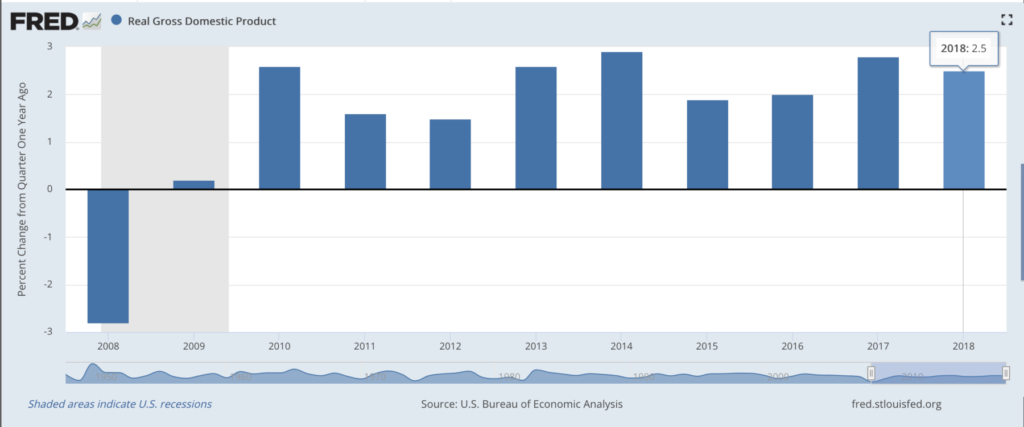

@TBPInvictus here. [Chart: Real GDP, Percent Change from Quarter One Year Ago, Annually, End of Period. Source: FRED] The Bureau of...

Read More

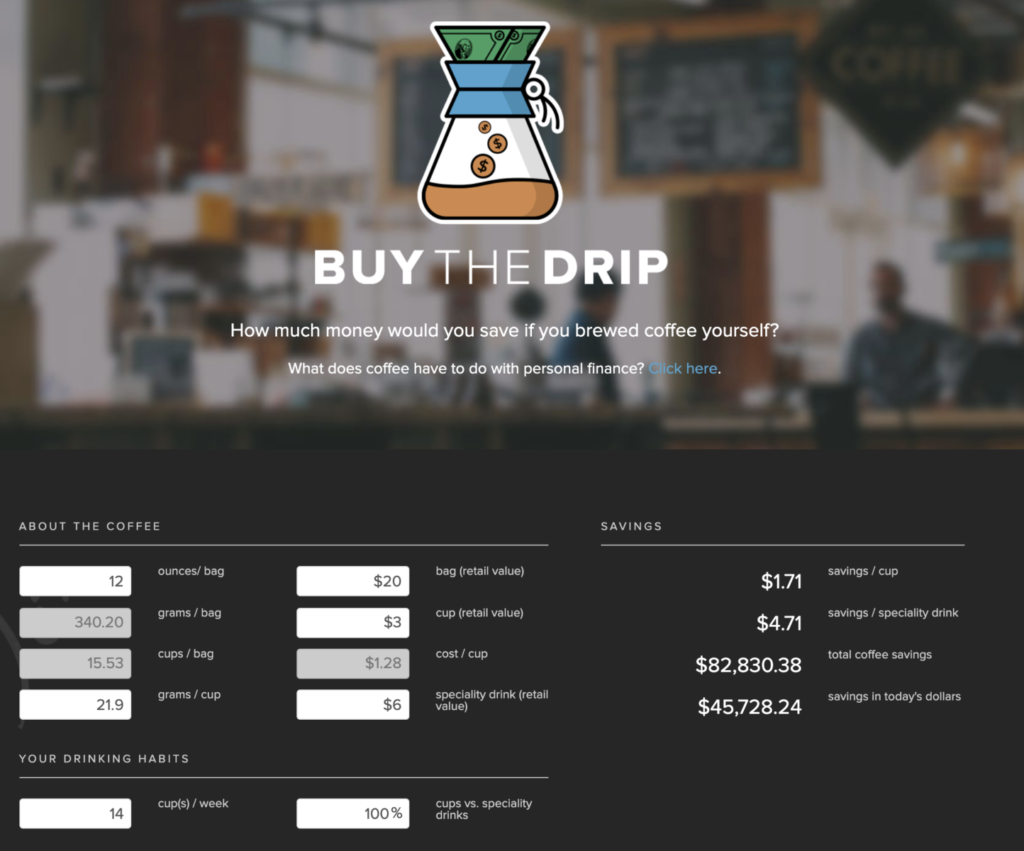

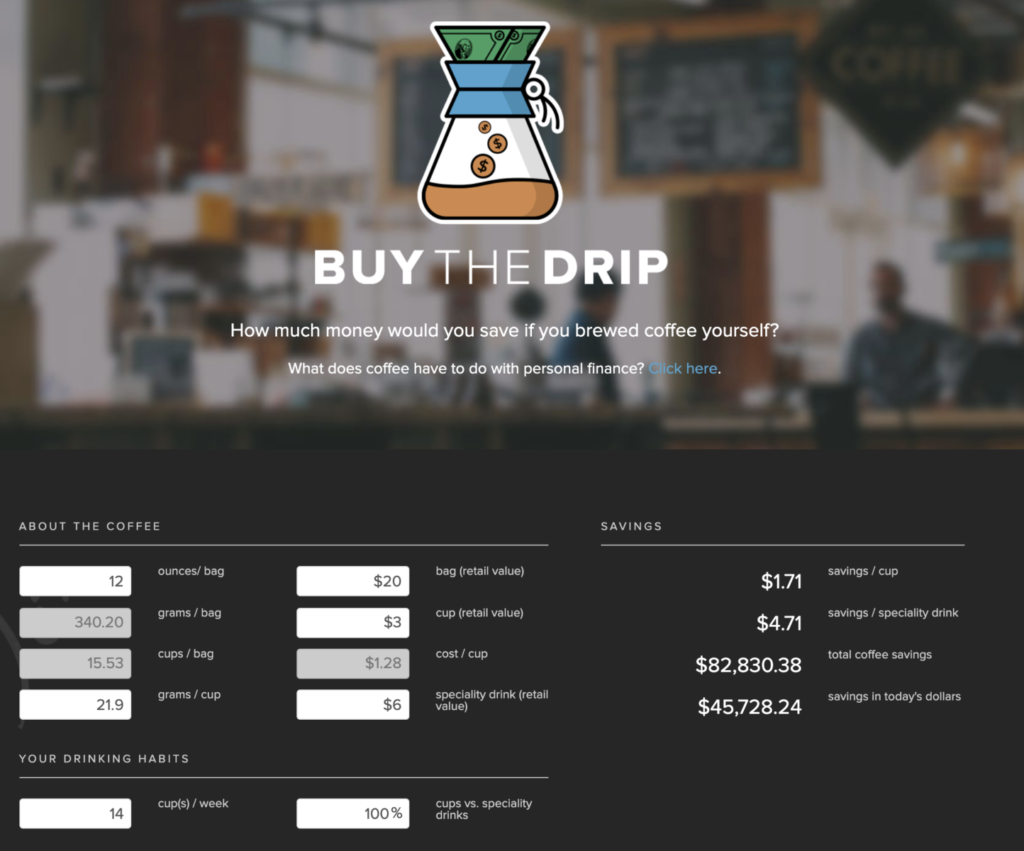

It’s a Summer Friday, a perfect time for something I have been meaning to get to posting on: Buy the Drip! (Kashana Cauley post...

Read More