Salil Mehta is a two-time Administration executive, having led Treasury/TARP’s analytics team as well as PBGC’s policy, research, and analysis, including its first risk analysis function. Salil is the creator of the popular free statistics blog, Statistical Ideas.

~~~

In a disturbing risk report from University of Oxford scientists and non-profit policy minds, people are said to be 5 times more likely to die of a human-extinction event than in a car crash. The report has been influential with governments. However, it is also full of so many egregious probability blunders, which overwhelmingly negate its analysis, that a blog article here was warranted to level-set the public. Our blog has often studied the weighty topic of mortality (samples here, here, here, here, here). The Oxford report was brought to my attention by investment head Barry Ritholtz, who was interested (as many are) in our peer-reviewing such headline mortality claims (equivalent in some respects to suggesting a roulette ball has a much higher chance of landing on green if you study the problem in a comical way). There are a number of lessons in here about evaluating the merits of any research, and particularly about journalists who simply and regularly vocalize such reports, and even then find astonishing new ways to further expand on the underlying report’s errors (as The Atlantic magazine, for example, plainly did)!

We’ll focus our attention on five of the most critical subject areas that have significant impact, without laundry-listing every possible issue found (which would be nearly 10). The first issue is that we cannot directly compare the mortality of humans alive today with an existential event over the next 100 years. Humans alive today have a conditional life expectancy that varies around the globe. As examples, currently it is 43 additional years for Americans and 37 additional years for Indians. Those are significantly fewer years than 100 in which one could be exposed to a human-existential risk. Even starting at birth, the life expectancy varies even more (but still stays firmly below 100 years): for example, 79 years for Americans and 66 years for Indians. One would not absurdly state that having a ball land on a green spoke (<1 in 37) in roulette is more likely than landing on a red spoke (>18 in 38). However, scientists can falsely buttress such claims by mixing time frames, for example with misleading comments such as that it is likelier for the ball to land on a green spoke over the next several dozen or more spins than on a red spoke in just the next single spin!
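The mixed-time-frame trick can be sketched numerically. The snippet below is only an illustration: it takes the paragraph's two bounds at face value (at most 1 in 37 for green, at least 18 in 38 for red), assumes independent spins, and uses a hypothetical helper name. It counts how many spins are needed before "at least one green" overtakes "red on the next single spin".

```python
def spins_until_more_likely(p_rare: float, p_common: float) -> int:
    """Smallest number of spins n for which the chance of at least one
    rare outcome in n spins exceeds the chance of the common outcome
    in a single spin. Assumes independent spins (illustrative only)."""
    n = 1
    while 1.0 - (1.0 - p_rare) ** n <= p_common:
        n += 1
    return n

# Bounds quoted in the text: green at most 1 in 37, red at least 18 in 38.
p_green = 1 / 37
p_red = 18 / 38

n = spins_until_more_likely(p_green, p_red)
print(f"Green over {n} spins beats red on one spin, "
      f"even though green is far less likely per spin.")
```

The per-spin odds never change; only the window of observation does, which is the same sleight of hand as comparing a 100-year extinction window against a shorter remaining lifetime.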

The second issue is the false assumption that the mortality rates for a car crash and for an extinction event are both identically (discrete) uniform and apply to everyone equally. Nothing could be further from the truth, and the topic of mortality functions can be quite advanced (here, here). This error can be observed when they apply their “.1%” discrete annual annihilation mortality rate over 100 years to get a 9.5% mortality. The compound actuarial formula that fits this false assumption is:

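$$
\begin{aligned}
\text{Survival rate} &= (1 - \text{annual deaths})^{\text{years}} \\
&= (1 - 0.1\%)^{100} \\
&= 90.5\% \quad \text{(or a failure rate of } 100\% - 90.5\% = 9.5\%\text{)}
\end{aligned}
$$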
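As a quick check of the arithmetic above, here is a minimal sketch of the same compounding under the report's uniform-rate assumption (which this article disputes). The function name is hypothetical, and the 43-year horizon is just the American conditional life expectancy quoted earlier, plugged in for comparison.

```python
def cumulative_mortality(annual_rate: float, years: int) -> float:
    """Chance of dying within `years`, assuming the same annual
    mortality rate applies independently every year."""
    survival = (1.0 - annual_rate) ** years
    return 1.0 - survival

annual_rate = 0.001  # the report's ".1%" annual rate

# The report's 100-year benchmark.
print(f"100 years: {cumulative_mortality(annual_rate, 100):.1%}")

# A shorter horizon: 43 additional years of conditional life expectancy.
print(f" 43 years: {cumulative_mortality(annual_rate, 43):.1%}")
```

Even before questioning the uniform rate itself, simply shortening the horizon to a realistic remaining lifetime lowers the cumulative figure considerably.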

In reality the mortality function is far more complex for both causes of death. For motor accidents (a component of unintentional deaths) the distribution is heavier in the earlier years, where natural death causes are lower, and lighter towards the higher end of life expectancy, where there is a rise in natural-cause mortalities such as cardiovascular disease. In other words, even if motors were banished from earth as you reach, say, 79 years old, it doesn’t imply you have that much longer to live (say, to the Oxford report’s kooky 100-year benchmark), since your death is likely imminent from a non-motor-related reason anyway.

For human-extinction events the distribution is the exact opposite, since they happen so infrequently (the exact opposite message from their report). The definition of such an event is one that wipes out 15% or more of the world’s population. The problem the scientists have discovered is that for most of the past two millennia, there have only been two instances of this (the last one was 700 years ago!). Two instances in 2000 years means that every century has less than a 10% chance of containing a human-existential event: the sum of the 1-event probability, 2*1/20*19/20, and the 2-event probability, 1/20*1/20, is 39/400, or about 9.75% (see here, here, here, here for more on convolution theory).
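The per-century figure can be verified with exact fractions. This sketch assumes, as the paragraph does, that each of the two historical events is equally likely to fall in any of the 20 centuries, independently of the other; the function name is hypothetical.

```python
from fractions import Fraction

def prob_century_has_event(total_events: int = 2, centuries: int = 20) -> Fraction:
    """Chance that a given century contains at least one of the events,
    assuming each event lands in any century with equal probability."""
    p = Fraction(1, centuries)
    prob_none = (1 - p) ** total_events
    return 1 - prob_none

# The two terms spelled out in the text.
exactly_one = 2 * Fraction(1, 20) * Fraction(19, 20)
both = Fraction(1, 20) * Fraction(1, 20)

total = exactly_one + both
assert total == prob_century_has_event()
print(total, float(total))
```

Both routes give the same answer, which sits just under the 10% ceiling stated above.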

The result of such low-frequency events has been two-fold. The first is that the Oxford scientists have artificially eased up on their definition of a human-existential event by lowering the threshold of deaths to, say, a few percent (from >15%), and have even combined events together (e.g., flus and war) or reduced “the World” to a smaller civilization such as only the Aztecs. This is all cheating! The second result is that such a low frequency means that events don’t typically happen during the course of a century, but rather once every 300 or so years. The direct impact of this is that the mortality function over the next century (at any point in time) is far from uniform, but rather ramps higher, similar to a Poisson distribution. We now need both to look for lower mortality in the initial years and, based on the first flaw at the start of the article, to look at fewer years than 100 to begin with! Making both adjustments implies that a .1% mortality rate would actually be about .02%. The latter was derived from taking the .1% and applying adjustments of 1/3 for the ramp-up in low frequency and 2/3 for a life expectancy lower than 100 years. In other words, these alone should lower their human-extinction mortality by a factor of roughly 5, then equaling that which they suggest for a car crash!
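The two adjustments can be multiplied out directly. This is only a sketch of the arithmetic stated above, with hypothetical variable names; the 1/3 and 2/3 factors are the article's own rough adjustments, not derived here.

```python
reported_annual_rate = 0.001   # the report's ".1%"
ramp_up_factor = 1 / 3         # events arrive roughly once per 300 years, not per century
horizon_factor = 2 / 3         # remaining lifetimes are well short of 100 years

adjusted_rate = reported_annual_rate * ramp_up_factor * horizon_factor
reduction = reported_annual_rate / adjusted_rate

print(f"adjusted rate: {adjusted_rate:.4%}")
print(f"reduction factor: {reduction:.1f}")
```

The unrounded product is about .022%, a reduction of 4.5 times; rounding the adjusted rate to .02% gives the factor of roughly 5 quoted in the text.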

A third issue is that one cannot simply take a rare catastrophic event, of the kind that centuries go by between, and insert it into the “death cause” mix, as they attempted to do, without carefully drawing down other existing death causes. For actuarial scientists and life insurance strategists, there are four mortality risks to consider: trends in mortality, the level of mortality, volatility in the mortality estimate, and catastrophic and unexpected shifts to the entire curve. In this article we are dealing with this last and most difficult risk, particularly when separating it from the other three primary risks.

But there are a couple of other issues with the work still. The fourth issue is that they (and, independently and puzzlingly, the journalist at The Atlantic) rounded the mortality to the nearest single significant digit! Any reasonable professional knows that this is mathematically treacherous, even more so when that single digit is a “1”. See this table below.

What we see is that forced rounding to a single digit is far more problematic when extrapolated over 100 years (something both the Oxford scientists and The Atlantic journalist independently did). We don’t expect journalists to be at this high level of probability and math skill, but we do expect that items be fact-checked before such egregious errors propagate. And, perhaps a surprise to some, we also see that the 100-year extrapolation creates a larger error when that single digit is low, such as “1” (see the right-most column of the orange rows in the table), than when that single digit is much higher, such as “9” (see the right-most column of the green rows in the table).

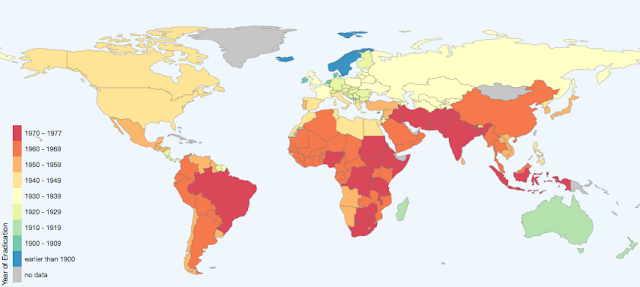
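The rounding effect can be illustrated with a small sketch. It is not a reproduction of the table; it assumes a uniform annual rate compounded over 100 years, and the function names and the 0.1%-scale rates are chosen only for illustration. For a stated one-digit rate, it finds the range of true rates that would round to it and compares the resulting spread in 100-year mortality.

```python
def hundred_year_mortality(annual_rate: float, years: int = 100) -> float:
    """Cumulative mortality under a constant annual rate."""
    return 1.0 - (1.0 - annual_rate) ** years

def rounding_band(leading_digit: int, unit: float = 0.001):
    """Range of annual rates that round to leading_digit * unit at one
    significant digit. Below a leading 1 the grid is ten times finer,
    so the lower edge is 0.95 rather than 0.5."""
    low = 0.95 * unit if leading_digit == 1 else (leading_digit - 0.5) * unit
    high = (leading_digit + 0.5) * unit
    return low, high

def relative_spread(leading_digit: int, unit: float = 0.001) -> float:
    """Width of the possible 100-year mortality range, relative to the
    100-year mortality implied by the rounded rate itself."""
    low, high = rounding_band(leading_digit, unit)
    stated = hundred_year_mortality(leading_digit * unit)
    return (hundred_year_mortality(high) - hundred_year_mortality(low)) / stated

for digit in (1, 9):
    print(f"leading digit {digit}: relative spread {relative_spread(digit):.0%}")
```

Under these assumptions, a rate reported as a single “1” hides a much wider range of 100-year outcomes, relative to the headline figure, than a rate reported as a single “9”.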

The fifth issue with their research is that they point to purposefully rare hypotheticals to back into the probabilities they seek. Take, for example, a 6 degree Celsius aberration in global temperatures. Now sure, this seems frightening. But over 100 years this implies a continuous annual temperature climb of 0.06 degrees. As we show in our global glowing article, this is a flimsy idea. Temperatures have been heterogeneous about the globe (just as not everyone about the globe drives, and disease longevity patterns are amuck, the latter shown by scientist Max Roser and WHO below for smallpox). But overall the temperature has risen annually by about 0.1 degree Fahrenheit (which amounts to annual changes of 5/9*0.1 degrees, or 0.06 degrees Celsius). The only problem is that the 0.06 degrees of annual warming is the result of a recent spike, one that is not expected to simply continue unrelentingly. Temperatures have plenty of room to behave as they have over their broader history, by either being flat or simply cooling.

So as we see from the five issues just raised, even university science papers mixed with policy initiatives can often lead to imperfect results. Telling a story with sloppy math hurts the evidence and the progress that can be believed.

Unrelated: Our recent lottery article was enjoyed by Tom Keene (star editor at Bloomberg Television) and others in the media such as Barry Ritholtz (Bloomberg), Michael Kitces, Spencer Jakab (WSJ), Abnormal Returns and Zero Hedge. Additionally, a recent article in Zero Hedge utilizing one of our chart analyses was one of their top articles for the day (>100 thousand reads)! Finally, a reminder that we can now be followed on Twitter (@salilstatistics).